One of the most interesting rabbit holes to explore on Twitter is #plottertwitter. Under this hashtag, you’ll see a variety of computer-generated artwork and videos of plotting machines that sound like they’re being controlled by ancient floppy drive motors.

(Update 2022: There was a linked Tweet here of a cool video of a pen plotter, but it’s since been deleted. :( )

There’s an interesting little community that’s grown up around the resurgence of these plotting machines. The most popular plotting machine of #plottertwitter seems to be the AxiDraw, but there are people still using old HP plotters from the ’80s as well as a few brave DIY’ers that have built their own machines. There’s something immediately captivating about these machines. The chirping of servo motors and the tactile sound you hear as pen hits paper, not to mention the cool visuals, produce an oddly satisfying video experience.

Of course, the appeal of #plottertwitter isn’t just the machines themselves, but the artwork that they plot out. Plotter art is a sub-genre of generative art. Many people would also include plotting art and generative art in the larger trend of creative coding, which has seemingly become more popular recently (for example, with the rise of Glitch). I’d recommend Artnome’s “Why Love Generative Art?” and Sher Minn Chong’s History of Computer Art series to get a good overview of the history of generative art, which dates back to the 1960s.

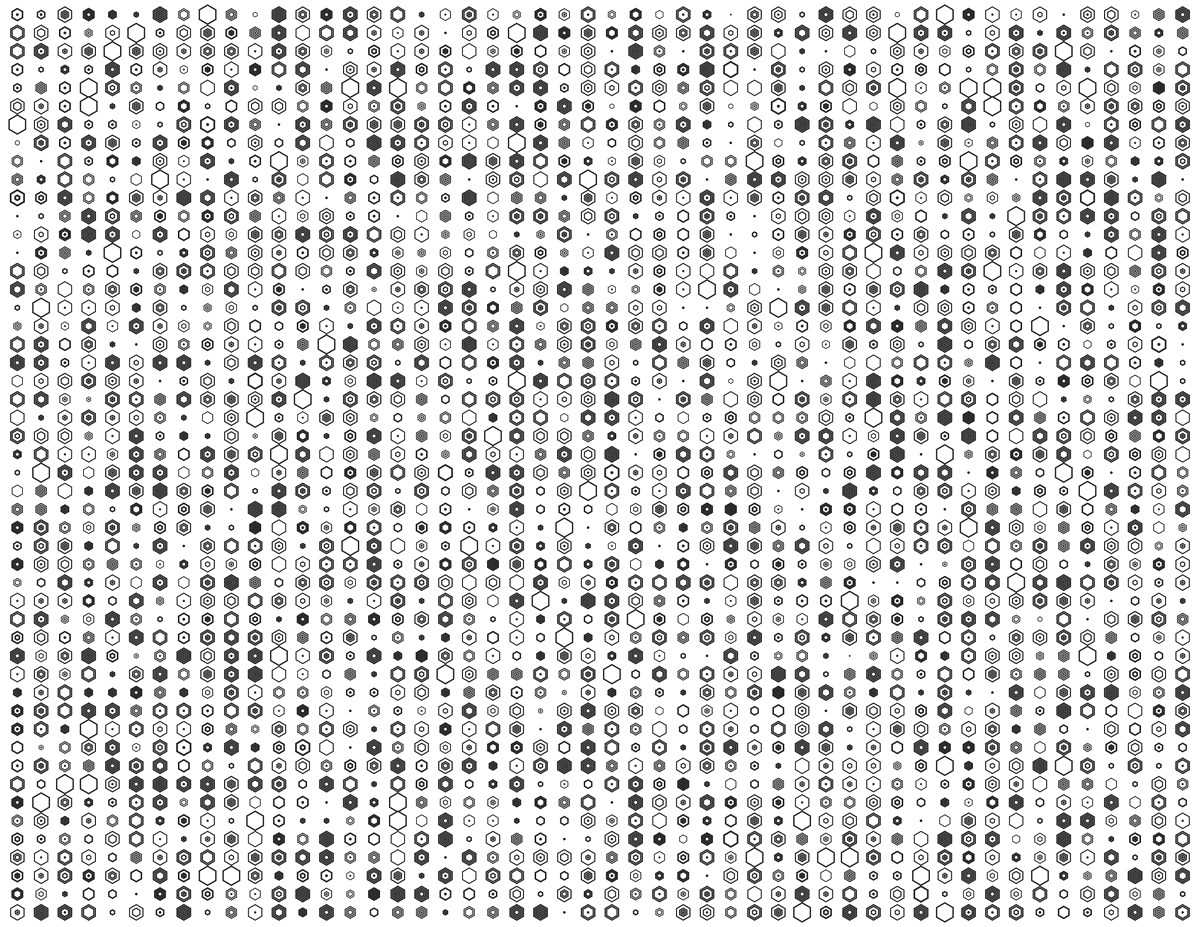

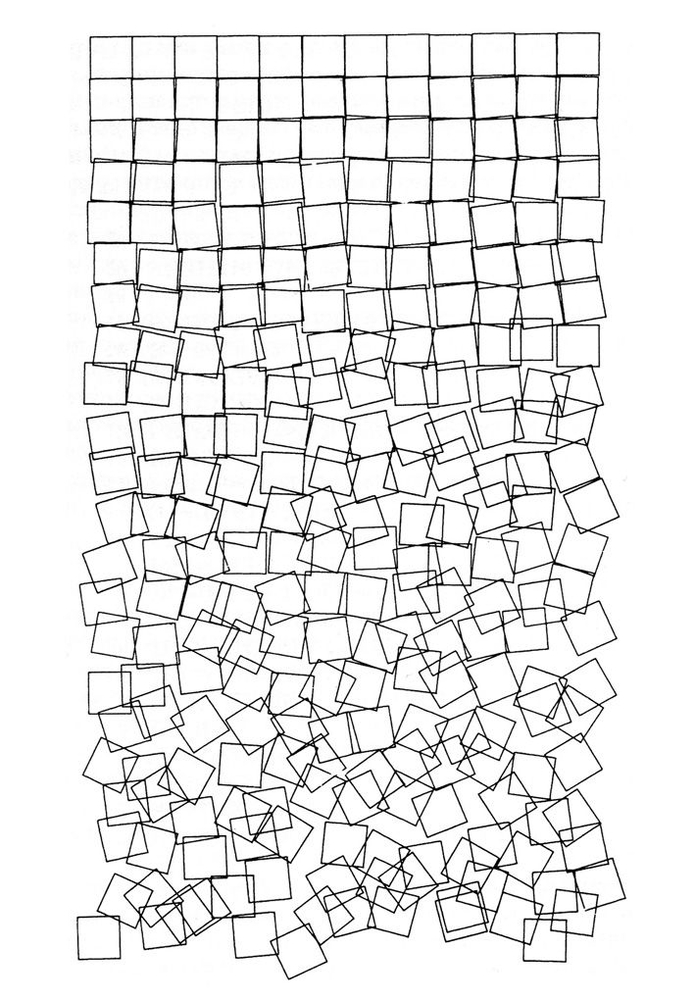

An Example of Early Generative Art: Schotter - Georg Nees, 1968. Photo Credit: Artnome

I’ve been keeping an eye on the #plottertwitter ecosystem for a while, but without a plotter of my own, I felt that this type of creative coding was out of reach.

I’m sure we’ve all had an experience like this before: “I could be an amazing photographer but for my lack of an expensive camera”, “I could produce a cool podcast but for my lack of a complicated audio system”, etc. I didn’t want to invest in a plotter, which can cost several hundred dollars, until I’d demonstrated to myself that generative art was more than a passing curiosity.

So, in late January, when I was looking for a side-project to dig into, I decided to make generative coding a practice to try out. I’ve found that, personally, practices that can be systematized as habits tend to “stick” well.

My plan was to do some type of “generative doodle” about every other day. I chose the phrase “generative doodle” intentionally: generative to denote that the work should involve some type of algorithmic creativity and doodle to lower the stakes and set conservative expectations.

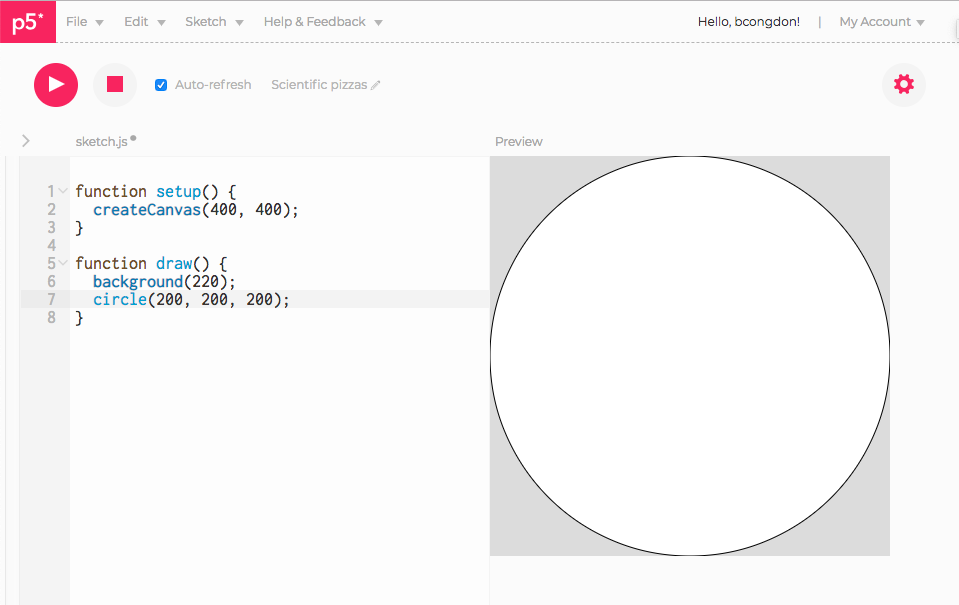

For the first few weeks, I had about as simple a workflow as one could have. I used the P5.js web editor. It’s basic, but it gives you everything you need: a place to write code and a live-refreshing view of your output. The P5.js docs have a good number of examples and, because it’s written in JavaScript, there isn’t much syntactic overhead.

P5.js Web Editor

P5.js is the JavaScript-flavored spiritual successor of Processing, which played an interesting role in the history of creative coding.[1] For example, Processing inspired the Arduino IDE. Processing has its own IDE and a syntax that feels like a mixture of C and JavaScript. You can find a wealth of examples of generative art created using Processing online. P5.js is decoupled from an IDE, so it can be used as a generic graphics library for the web. It also has some cool non-visual features, like audio processing and mobile motion controls.

Eventually, I outgrew the P5.js web editor and developed a more stable workflow. The P5.js web editor is great to get started, but it has a couple of shortcomings. First, it runs your code directly in the browser without any way to “stop” your code. So, if you mess up and create an infinite loop or runaway recursion, you have to kill the browser tab and start over. The editor has saving built in, but I still lost work this way. Second, P5.js is great, but it’s also written in JavaScript. Not to bash JS, but… I prefer the structure of a statically typed language.

My current setup uses Michael Fogleman’s gg graphics library for Go. The API is simple and intuitive. Unfortunately, it only produces rasterized images, so I’ll have to switch back to P5.js (or something like draw2d) if I want to plot my doodles on a pen plotter. But, I’ll cross that bridge when I get to it.

The underlying concepts across most of the graphics libraries I’ve interacted with (P5.js, gg, line2d, etc.) are mostly transferable. Once you get comfortable with manipulating 2D primitives (lines, polygons, circles) and the drawing state that controls how they’re rendered (line width, fill style, view transformations), you can move between graphics libraries without a whole lot of relearning.

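```go
// Setting up a drawing and creating a circle with `gg`
package main

import (
	"log"

	"github.com/fogleman/gg"
)

func main() {
	dc := gg.NewContext(400, 400) // 400x400 pixel canvas

	// Fill the background with light gray.
	dc.SetRGB(220./255, 220./255, 220./255)
	dc.Clear()

	// Stroke a black, 1px-wide circle centered on the canvas.
	dc.SetRGB(0, 0, 0)
	dc.SetLineWidth(1)
	dc.DrawCircle(200, 200, 200)
	dc.Stroke()

	if err := dc.SavePNG("output.png"); err != nil {
		log.Fatal(err)
	}
}
```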

After I’d used gg for a few days, I made another improvement to my workflow. The process for creating a generative image is a cycle of (1) write some code, (2) run your program and look at the output image, (3) make tweaks and repeat. The simplest approach is to write your output to the same file after each run (e.g., output.png), but this doesn’t maintain a history of past outputs. I ported Inconvergent’s file-naming Python module to Go and use that now. Each time I run my generative scripts, the output is a unique date-sortable file. Many of the doodles I’ve worked on (especially those involving fractals) necessitate parameter tweaking, so keeping each version of a drawing helps to tell if I’m getting closer to the look I want with each run.

A fun byproduct of keeping all your outputs is that you can stitch together all the images you’ve generated into a cool animated GIF:

Progress Animation for Feb 23, 2019

Suffice it to say, I’ve really enjoyed my experience diving into generative art. I’ve noticed a variety of interesting knock-on effects:

- I’ve become less reluctant to work with coordinate systems. I’ll admit that I have a strange phobia: I’ve often felt there’s something “unclean” in working with coordinates in graphics. This is probably because I started working on web development when “responsive design” was the big buzzword, so everything had to scale to arbitrary screen sizes. Acclimating myself to coordinate systems (and graphics programming more generally) has opened up some doors. I’m working on another, as yet unreleased, project that was more-or-less predicated on becoming comfortable with manual graphics programming.

- I’ve started to dig up and use some of my old math knowledge that’d previously gone unused. Want to determine how to arrange triangles so that they tessellate well? Pull out a sheet of graph paper and do some trigonometry. Want to arrange a bunch of circles so that they’re adjacent to each other? More math. However, this isn’t “plug and chug” math-course-style math, but rather something more tangible and fun.

- It’s been an outlet to satisfy some lingering curiosities I’ve had. One of my doodles used a basic form of spatial hashing. Another explored space-filling curves. There are lots of applications for genetic algorithms, flocking algorithms, and constraint-solving algorithms that I’d like to explore at some point. Inconvergent has been doing some cool experiments with neural networks that seem promising.

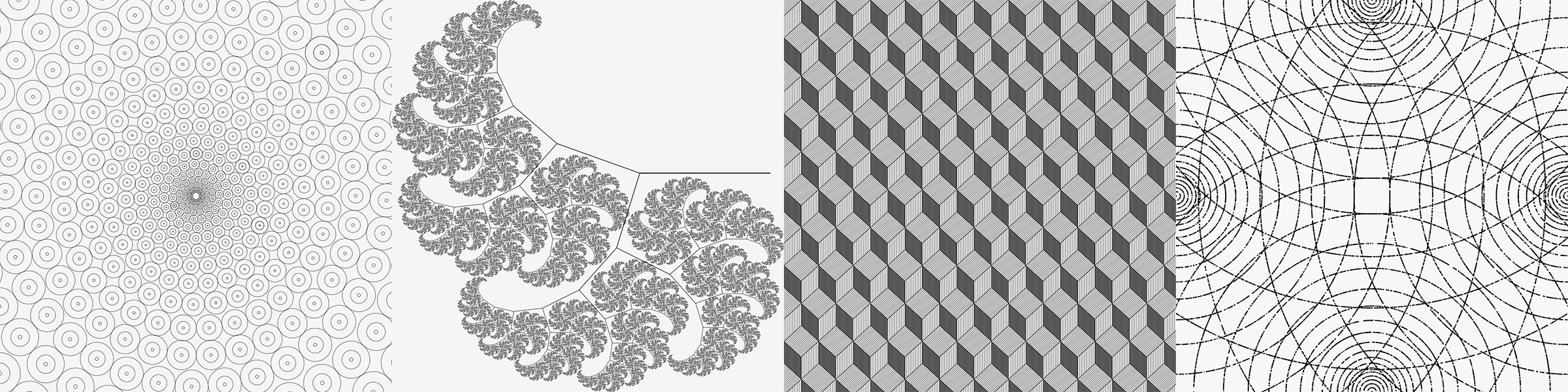

I’m also rather excited about further extensions of this skillset. So far, my generative doodles have been intentionally simplistic. I’ve constrained myself to spend at most ~1 hour on each, I start from scratch for each doodle, and I’ve only been using black line strokes. These limitations were super useful in making sure that I stuck to my practice of creating a doodle regularly, but I can sense that they also mean this habit is starting to reach its point of diminishing returns (a concept that I wrote about last year). I’ll also admit that I do feel a bit guilty about spamming my Twitter timeline with black-and-white geometric patterns. 😅

Looking forward, I might try to dig deeper into some more complicated generative algorithms and experiment with a varied color palette. I’m also looking forward to experimenting with an actual plotter machine.

Reflecting more broadly, this project has shown me something that I’ve felt intuitively for a while: there’s value in having a tangible output for a project. There’s something deeply satisfying about holding the output of a project in your hand (like my GitTrophy 3d-prints), touching an app that you’ve written, or showing someone a data visualization that you’ve created. When working on backend or library codebases, it can sometimes feel like you’re buried under too many layers of abstraction. Sometimes, it’s fun to peel some of those layers back and make something tangible.

Additional Resources

- DrawingBots.net has a great list of tools. I’ve joined its Discord community and have found it to be a great source of inspiration.

- Inconvergent’s essay series on generative art is a great primer for getting a sense of what is possible.

- Michael Fogleman’s “Pen Plotter Programming: The Basics” is a good jumping-off point for plotting-style art.

[1] I’d highly recommend reading “A Modern Prometheus”, written by Processing’s creators, for a detailed account of Processing’s development.